Guardrails

Interfaze provides configurable content safety guardrails that automatically detect and filter potentially harmful or inappropriate content in both text and images, so your application can enforce its content standards.

The guard system evaluates content against a set of safety categories, and you choose which categories to enable to match your requirements and compliance needs.

Guard Categories

The Llama Guard system supports the following safety categories:

- S1: Violent Crimes

- S2: Non-Violent Crimes

- S3: Sex-Related Crimes

- S4: Child Sexual Exploitation

- S5: Defamation

- S6: Specialized Advice

- S7: Privacy

- S8: Intellectual Property

- S9: Indiscriminate Weapons

- S10: Hate

- S11: Suicide & Self-Harm

- S12: Sexual Content

- S12_IMAGE: Sexual Content (Image)

- S13: Elections

- S14: Code Interpreter Abuse
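
When guard codes are assembled programmatically, it can help to keep the category list in one place and reject typos before they reach a prompt. The following is a minimal sketch in Python: the codes and labels are copied from the list above, while `GUARD_CATEGORIES` and `validate_guards` are hypothetical names chosen for illustration and are not part of any Interfaze SDK.

```python
# Guard codes and labels copied from the category list above.
# GUARD_CATEGORIES and validate_guards are illustrative names, not an Interfaze API.
GUARD_CATEGORIES = {
    "S1": "Violent Crimes",
    "S2": "Non-Violent Crimes",
    "S3": "Sex-Related Crimes",
    "S4": "Child Sexual Exploitation",
    "S5": "Defamation",
    "S6": "Specialized Advice",
    "S7": "Privacy",
    "S8": "Intellectual Property",
    "S9": "Indiscriminate Weapons",
    "S10": "Hate",
    "S11": "Suicide & Self-Harm",
    "S12": "Sexual Content",
    "S12_IMAGE": "Sexual Content (Image)",
    "S13": "Elections",
    "S14": "Code Interpreter Abuse",
}


def validate_guards(codes):
    """Return the codes as a list if all are known; raise ValueError otherwise."""
    unknown = [code for code in codes if code not in GUARD_CATEGORIES]
    if unknown:
        raise ValueError(f"Unknown guard categories: {', '.join(unknown)}")
    return list(codes)
```

Calling `validate_guards(["S1", "S10"])` returns the list unchanged, while a misspelled code such as `"S99"` raises a `ValueError` before any request is sent.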

How to Enable Guardrails

To enable content safety guardrails, include the guard configuration in your system prompt using the following format:

Example System Prompts

Basic Safety Guardrails:

Comprehensive Content Filtering:

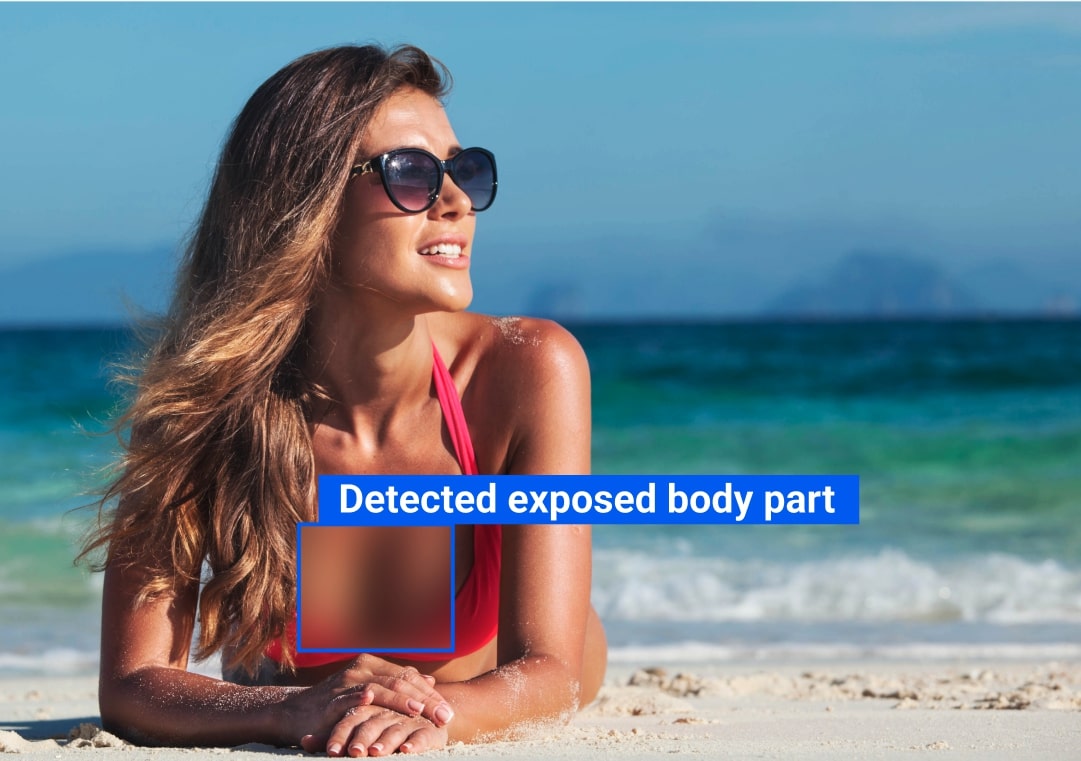

Image NSFW Detection:

Custom Combination:

Guard Response

When guardrails are triggered, the system returns a structured response indicating which safety category was violated:

Implementation

Text Content Guarding

When guardrails are enabled, the system automatically evaluates all text content against the specified safety categories. If content violates any enabled guard, the request will be blocked with an appropriate error message.
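
The blocking rule described above (a request is blocked when the content violates any enabled guard) can be modelled as a set intersection. This is only an illustrative model of the documented behaviour, not Interfaze's implementation; `violated_guards` and `is_blocked` are hypothetical helper names, and `flagged` stands in for whatever categories an evaluation reports.

```python
def violated_guards(enabled, flagged):
    """Return the enabled guard codes that the content was flagged for, sorted."""
    return sorted(set(enabled) & set(flagged))


def is_blocked(enabled, flagged):
    """A request is blocked when at least one enabled guard is violated."""
    return bool(violated_guards(enabled, flagged))
```

Under this model, content flagged only for a category that is not enabled passes through, which is why the choice of enabled categories matters.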

Image NSFW Detection

For image content, use the S12_IMAGE guard to automatically detect and filter NSFW content. The system will analyze uploaded images and block requests containing inappropriate visual content.
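
One way to apply this on the client side is to add `S12_IMAGE` to the guard list only when a request actually carries images. The sketch below assumes the caller already knows whether images are attached; `guards_for_request` is a hypothetical helper, not an Interfaze function.

```python
IMAGE_GUARD = "S12_IMAGE"  # code taken from the Guard Categories list


def guards_for_request(text_guards, has_images):
    """Return the guard codes for a request, adding S12_IMAGE when images are present."""
    guards = list(text_guards)
    if has_images and IMAGE_GUARD not in guards:
        guards.append(IMAGE_GUARD)
    return guards
```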