Introducing the Structured Output Benchmark (SOB)


LLMs are increasingly deployed to produce structured data from unstructured and semi-structured sources: parsing invoices, medical records, and meeting transcripts, or converting PDFs to database rows.

In a deterministic workflow, the next step reads a specific key and expects a specific type. A hallucinated invoice_total, or an array sorted in the wrong order because its date values are inaccurate, silently breaks downstream systems. Yet existing benchmarks either check schema compliance alone or evaluate value correctness within a single source domain.

Top 5 at a glance

A side-by-side look at the top 5 models across all seven metrics. The structural metrics (JSON Pass, Path Recall, Structure Coverage, Type Safety) cluster near the ceiling for every model, while Value Accuracy and Perfect Response separate them.

The problem with current structured output benchmarks

Most benchmarks collapse "structured output quality" into a single number: does the response parse, and does it validate against the schema? That's necessary but not sufficient.

| Problem in current benchmarks | What it misses |
|---|---|
| Schema compliance as the only metric | A model can emit perfectly valid JSON with wrong values and score 100% |
| Single-source inputs (text only) | Real systems extract from OCR, screenshots, meeting audio, and PDFs, not just clean text |
| No difficulty weighting | Medium and hard schemas are scored identically, hiding which models actually handle nested structure |
| No separation of parse / structure / value errors | You can't tell if a model failed at JSON, at the schema, or at the facts |
| Reasoning / chain-of-thought blended in | Results measure reasoning + extraction together, not the extraction capability itself |

References to existing benchmarks: JSONSchemaBench | StructEval | DeepJSONEval | LLMStructBench

How SOB works

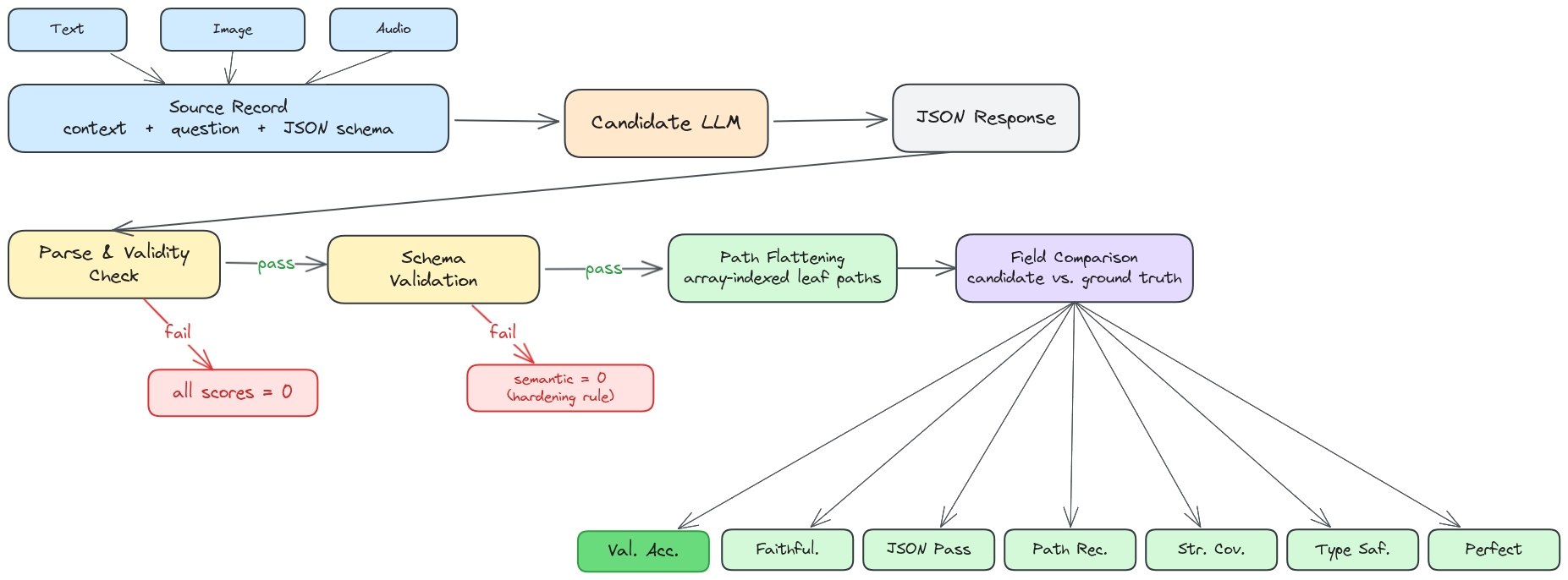

SOB evaluates structured output across three modalities using the same scoring harness. The goal is to isolate the extraction capability from every other ability a model has.

Three sources, one scoring pipeline

| Modality | Source dataset | Eval records |
|---|---|---|
| Text | HotpotQA context passages | 5,000 |
| Image | olmOCR-bench documents | 209 |
| Audio | AMI Meeting Corpus conversations | 115 |

Every record is paired with a JSON Schema and a ground-truth answer that was verified against the source context through human authoring with an LLM cross-check, so a missing or hallucinated value is unambiguously wrong.

To isolate the structured-output capability from vision and ASR quality, image and audio records are converted to text-normalized context before scoring. Models see the same modality-stripped context, and the differences that remain are attributable to how they handle schemas, nesting, and value grounding under different content distributions.

Seven metrics, not one

SOB reports seven metrics per record so you can see exactly where a model fails:

| Metric | What it measures |
|---|---|
| Value Accuracy | Exact leaf-value match against the verified ground truth (primary) |
| JSON Pass Rate | The response is parseable JSON |
| Type Safety | All leaf values match the declared JSON Schema types |
| Structure Coverage | The response includes the required object/array structure |
| Path Recall | All required JSON paths (keys) are present |
| Faithfulness | Values are grounded in the source context, not hallucinated |
| Perfect Response | Every leaf value is exactly correct for the full record |

Value Accuracy is the metric that matters for production. It's the share of fields a downstream system can trust without a human review step.
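The leaf-level metrics above can be sketched in a few lines. This is a minimal illustration, not the SOB harness itself: `flatten_leaves` and `leaf_metrics` are hypothetical names, the sample record is invented, and scoring Path Recall as a per-leaf fraction is an assumption about how the metric is aggregated.

```python
from typing import Any, Dict, Tuple

Path = Tuple[Any, ...]


def flatten_leaves(node: Any, prefix: Path = ()) -> Dict[Path, Any]:
    """Map each leaf of a JSON-like value to its path of keys and list indices."""
    if isinstance(node, dict):
        children = node.items()
    elif isinstance(node, list):
        children = enumerate(node)
    else:
        return {prefix: node}
    leaves: Dict[Path, Any] = {}
    for key, child in children:
        leaves.update(flatten_leaves(child, prefix + (key,)))
    return leaves


def leaf_metrics(predicted: Any, truth: Any) -> Dict[str, float]:
    """Compare predicted leaves against ground-truth leaves (assumed definitions)."""
    truth_leaves = flatten_leaves(truth)
    if not truth_leaves:
        raise ValueError("ground truth has no leaf values to score")
    pred_leaves = flatten_leaves(predicted)
    present = [p for p in truth_leaves if p in pred_leaves]
    correct = [p for p in present if pred_leaves[p] == truth_leaves[p]]
    total = len(truth_leaves)
    return {
        "path_recall": len(present) / total,
        "value_accuracy": len(correct) / total,  # missing paths count as wrong
        "perfect_response": float(len(correct) == total),
    }


# Invented example: one wrong value and one missing array element.
truth = {"invoice_total": 120.5, "vendor": {"name": "Acme"}, "items": ["a", "b"]}
predicted = {"invoice_total": 102.5, "vendor": {"name": "Acme"}, "items": ["a"]}
scores = leaf_metrics(predicted, truth)
print(scores)
```

The predicted record here is valid JSON with the right shape at the top level, yet only two of its four ground-truth leaves are correct, which is the kind of failure a schema-only check would not surface.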

Scoring gates

Two gates prevent inflated scores from schema-only wins:

- Hardening gate: If JSON parse fails, downstream semantic metrics are zeroed for that record.

- Coverage gate: Value Accuracy is only credited on fields the model actually returned, with missing paths counting as wrong.
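A rough sketch of how the two gates could interact for a flat record, assuming a hypothetical `score_record` helper and an invented two-field ground truth. The real harness may gate more metrics and handle nested schemas; this only illustrates the zeroing and the fixed denominator.

```python
import json

_MISSING = object()  # sentinel so a missing key never equals a real value, even None


def score_record(raw_response: str, truth: dict) -> dict:
    """Score one flat record under both gates (illustrative only)."""
    # Hardening gate: an unparseable response zeroes the downstream metrics.
    try:
        predicted = json.loads(raw_response)
    except json.JSONDecodeError:
        return {"json_pass": 0.0, "value_accuracy": 0.0}
    if not isinstance(predicted, dict):
        # Parseable, but not an object: no ground-truth field can match.
        return {"json_pass": 1.0, "value_accuracy": 0.0}
    # Coverage gate: the denominator is every ground-truth field, so a
    # missing path is scored exactly like a wrong value.
    correct = sum(predicted.get(key, _MISSING) == value for key, value in truth.items())
    return {"json_pass": 1.0, "value_accuracy": correct / len(truth)}


truth = {"invoice_total": 120.5, "currency": "USD"}
truncated = score_record('{"invoice_total": 120.5', truth)   # missing closing brace
partial = score_record('{"invoice_total": 120.5}', truth)    # currency omitted
print(truncated, partial)
```

Without the coverage gate, the second response would score 100% on the one field it returned. With it, the omitted `currency` field pulls Value Accuracy down to one half.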

Schemas are tagged as easy, medium, or hard. The final leaderboard is schema-complexity-weighted (easy = 1.0, medium = 2.0, hard = 3.0), so hard schemas contribute more to the ranking than medium or easy ones.
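One plausible reading of the complexity weighting is a weighted mean over per-record scores. The weights below come from the text; the aggregation formula, the `weighted_score` name, and the three sample records are assumptions for illustration.

```python
from typing import List, Tuple

# Weights as stated in the text.
WEIGHTS = {"easy": 1.0, "medium": 2.0, "hard": 3.0}


def weighted_score(records: List[Tuple[str, float]]) -> float:
    """Weighted mean of (tier, score) pairs; assumed aggregation, not confirmed."""
    total_weight = sum(WEIGHTS[tier] for tier, _ in records)
    return sum(WEIGHTS[tier] * score for tier, score in records) / total_weight


# Invented records: a model that does well on easy schemas and poorly on hard ones.
records = [("easy", 0.9), ("medium", 0.6), ("hard", 0.3)]
plain_mean = sum(score for _, score in records) / len(records)
weighted = weighted_score(records)
print(round(plain_mean, 3), round(weighted, 3))
```

Under this reading the plain mean is about 0.6 while the weighted score is about 0.5, so weak performance on hard schemas costs more than the unweighted average suggests.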

The results

We ran SOB on all models at temperature 0.0, with a maximum output of 2,048 tokens and with reasoning/thinking disabled wherever the provider allows it, so the score reflects pure structured output and extraction capability.

Unified leaderboard

| Rank | Model | Overall | Value Acc | Faithfulness | JSON Pass | Path Recall | Structure Cov | Type Safety | Perfect |
|---|---|---|---|---|---|---|---|---|---|
| 1 | GPT-5.4 | 0.870 | 0.798 | 0.869 | 0.993 | 0.988 | 0.981 | 0.993 | 0.469 |
| 2 | GLM-4.7 | 0.861 | 0.804 | 0.868 | 0.965 | 0.959 | 0.957 | 0.965 | 0.508 |
| 3 | Qwen3.5-35B | 0.861 | 0.801 | 0.863 | 0.969 | 0.962 | 0.960 | 0.969 | 0.500 |
| 4 | Gemini-2.5-Flash | 0.860 | 0.796 | 0.856 | 0.972 | 0.967 | 0.961 | 0.972 | 0.498 |
| 5 | Qwen3-235B | 0.857 | 0.786 | 0.854 | 0.978 | 0.970 | 0.968 | 0.978 | 0.463 |
| 6 | Interfaze-Beta | 0.855 | 0.795 | 0.858 | 0.967 | 0.962 | 0.957 | 0.967 | 0.480 |
| 7 | Claude-Sonnet-4.6 | 0.854 | 0.779 | 0.858 | 0.979 | 0.975 | 0.969 | 0.979 | 0.442 |
| 8 | GPT-4.1 | 0.850 | 0.783 | 0.853 | 0.969 | 0.963 | 0.959 | 0.969 | 0.454 |
| 9 | GPT-5 | 0.849 | 0.769 | 0.859 | 0.983 | 0.978 | 0.972 | 0.983 | 0.398 |
| 10 | Gemma-3-27B | 0.847 | 0.777 | 0.842 | 0.969 | 0.961 | 0.958 | 0.969 | 0.454 |
| 11 | Qwen3-30B | 0.842 | 0.753 | 0.832 | 0.983 | 0.974 | 0.970 | 0.983 | 0.401 |
| 12 | Nemotron-3-Nano-30B | 0.841 | 0.747 | 0.817 | 0.987 | 0.975 | 0.971 | 0.987 | 0.400 |
| 13 | GPT-5-Mini | 0.835 | 0.751 | 0.837 | 0.972 | 0.966 | 0.960 | 0.972 | 0.388 |
| 14 | Gemma-4-31B | 0.833 | 0.778 | 0.843 | 0.943 | 0.934 | 0.934 | 0.943 | 0.461 |
| 15 | Gemini-3-Flash-Preview | 0.833 | 0.773 | 0.831 | 0.939 | 0.935 | 0.929 | 0.939 | 0.484 |
| 16 | Schematron-8B | 0.832 | 0.731 | 0.807 | 0.987 | 0.976 | 0.969 | 0.987 | 0.370 |
| 17 | IBM-Granite-4.0 | 0.832 | 0.736 | 0.812 | 0.983 | 0.965 | 0.967 | 0.983 | 0.381 |
| 18 | Phi-4 | 0.831 | 0.787 | 0.849 | 0.969 | 0.961 | 0.961 | 0.969 | 0.452 |
| 19 | DS-R1-Distill-32B | 0.827 | 0.747 | 0.819 | 0.960 | 0.945 | 0.947 | 0.960 | 0.411 |
| 20 | Ministral-3-14B | 0.778 | 0.700 | 0.773 | 0.906 | 0.898 | 0.896 | 0.906 | 0.368 |
| 21 | GPT-OSS-20B | 0.732 | 0.667 | 0.730 | 0.845 | 0.838 | 0.836 | 0.845 | 0.362 |

The top four are within 1 point of each other on overall score, but swap freely across individual metrics. Rank order is metric-specific, not absolute.
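To see how rank order depends on the metric, the top four rows of the leaderboard can be re-sorted on individual columns. The numbers are copied from the table; the `rank_by` helper and the shortened column keys are illustrative names only.

```python
from typing import Dict, List

# Top four rows of the unified leaderboard (subset of columns).
rows: List[Dict] = [
    {"model": "GPT-5.4", "overall": 0.870, "value_acc": 0.798, "json_pass": 0.993, "perfect": 0.469},
    {"model": "GLM-4.7", "overall": 0.861, "value_acc": 0.804, "json_pass": 0.965, "perfect": 0.508},
    {"model": "Qwen3.5-35B", "overall": 0.861, "value_acc": 0.801, "json_pass": 0.969, "perfect": 0.500},
    {"model": "Gemini-2.5-Flash", "overall": 0.860, "value_acc": 0.796, "json_pass": 0.972, "perfect": 0.498},
]


def rank_by(table: List[Dict], metric: str) -> List[str]:
    """Return model names sorted from best to worst on one metric."""
    ordered = sorted(table, key=lambda row: row[metric], reverse=True)
    return [row["model"] for row in ordered]


by_value = rank_by(rows, "value_acc")
by_json = rank_by(rows, "json_pass")
by_perfect = rank_by(rows, "perfect")
for name, ranking in [("value_acc", by_value), ("json_pass", by_json), ("perfect", by_perfect)]:
    print(name, ranking)
```

GPT-5.4 leads these four on JSON Pass but sits third on Value Accuracy and last on Perfect Response, while GLM-4.7 shows the reverse pattern.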

Per-metric charts

Each chart re-sorts all 21 models on that single metric, so you can see which models win each category (not just the overall average).

To expose the gaps, each chart's x-axis starts from a floor appropriate to that metric (e.g. 60% for Value Accuracy, 80% for JSON Pass). Without that, the top cluster looks identical.

Value Accuracy

The metric production systems care about. Note how tightly the top cluster sits compared to the overall leaderboard spread.

Faithfulness

How often values are grounded in context instead of hallucinated.

JSON Pass Rate

Most models in the unified leaderboard (17 of 21) clear 95%. This is why a pass-rate-only benchmark can't separate them anymore.

Path Recall

Whether all required keys appear in the output.

Structure Coverage

Whether nested objects and arrays are present with the correct shape.

Type Safety

Whether leaf values respect the declared JSON Schema types (no strings where numbers are expected).

Perfect Response Rate

The fraction of records where every single leaf value is exactly right. This is the hardest metric and collapses to roughly half even for the best models.

The JSON-pass vs Value-Accuracy gap

The single most important view: most models clear 95%+ on JSON Pass, but Value Accuracy sits roughly 16 to 26 points lower. That gap is the space where structured output benchmarks have been lying to us.

The gap column is the headline. Every model on this list passes JSON parsing about 97% of the time or better, but actual leaf-value extraction drops by 17 to 26 points. Qwen3.5-35B has the tightest gap (16.8) and the highest Value Accuracy on the list, while Schematron-8B passes JSON 98.7% of the time but lands the lowest Value Accuracy at 73.1%, a 25.6 point fall.

| Model | JSON Pass | Value Accuracy | Gap |
|---|---|---|---|
| GPT-5.4 | 99.3% | 79.8% | 19.5 pp |
| Nemotron-3-Nano-30B | 98.7% | 74.7% | 24.0 pp |
| Schematron-8B | 98.7% | 73.1% | 25.6 pp |
| GPT-5 | 98.3% | 76.9% | 21.4 pp |
| Qwen3-30B | 98.3% | 75.3% | 23.0 pp |
| IBM-Granite-4.0 | 98.3% | 73.6% | 24.7 pp |
| Claude-Sonnet-4.6 | 97.9% | 77.9% | 20.0 pp |
| Qwen3-235B | 97.8% | 78.6% | 19.2 pp |
| Gemini-2.5-Flash | 97.2% | 79.6% | 17.6 pp |
| GPT-5-Mini | 97.2% | 75.1% | 22.1 pp |
| Qwen3.5-35B | 96.9% | 80.1% | 16.8 pp |
| Gemma-3-27B | 96.9% | 77.7% | 19.2 pp |
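The Gap column is simply JSON Pass minus Value Accuracy, expressed in percentage points. A small sketch using three rows from the table (the `gap_pp` helper is an illustrative name):

```python
def gap_pp(json_pass_pct: float, value_acc_pct: float) -> float:
    """Gap in percentage points, rounded to one decimal place."""
    return round(json_pass_pct - value_acc_pct, 1)


# (JSON Pass %, Value Accuracy %) copied from the table above.
rows = {
    "GPT-5.4": (99.3, 79.8),
    "Schematron-8B": (98.7, 73.1),
    "Qwen3.5-35B": (96.9, 80.1),
}
gaps = {model: gap_pp(*pair) for model, pair in rows.items()}
tightest = min(gaps, key=gaps.get)
print(gaps, tightest)
```

A percentage-point gap is used rather than a ratio because both metrics share the same denominator (all scored records), so the difference reads directly as the share of output that parses but cannot be trusted.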

Modalities diverge more than we expected

The same model scores very differently across text, image, and audio, even when every model gets the same text-normalized context. Audio is the hardest by far. The transcripts are long (~7,300 tokens on average) and full of overlapping speakers, so models struggle to pull out the right values.

Best Value Accuracy by modality across all valid models:

| Modality | Best Value Accuracy | Leader |
|---|---|---|
| Text | 83.0% | GLM-4.7 |
| Image | 67.2% | Gemma-4-31B |
| Audio | 23.7% | Gemini-2.5-Flash |

No single model wins all three. GPT-5.4 ranks 3rd on text but 9th on images. Schematron-8B ranks 19th on text but 10th on images. Gemma-4-31B ranks 11th on text but 1st on images.

Six patterns worth internalizing

- Valid JSON ≠ correct JSON. JSON Pass and Value Accuracy diverge by roughly 16 to 26 points on every model in the leaderboard.

- Structural metrics mask value errors. Path Recall / Structure Coverage / Type Safety can all read ~99% while 20 to 30% of leaf values are still wrong, and Perfect Response collapses to about half even for the top models.

- Model size is not a predictor. Qwen3.5-35B and GLM-4.7 beat GPT-5 and Claude-Sonnet-4.6 on Value Accuracy. Phi-4 (14B) edges out GPT-5 and GPT-5-Mini on text.

- Structured hallucinations are the hardest bug. The value is type-correct, schema-valid, and plausible, so it slips through most guardrails. On one audio record the ground truth is "target_market_age": "15 to 35 years" and a model returns "25 to 35", which is invisible without field-level checks.

- Modalities don't transfer. Text-trained structured output behavior degrades sharply when the source is a transcribed conversation. Best Value Accuracy drops from 83.0% on text to 67.2% on images to 23.7% on audio.

- Rankings shift across modalities. GLM-4.7 leads text, Gemma-4-31B leads images, Gemini-2.5-Flash leads audio. No single model dominates all three, so a text-only leaderboard would mask the gaps.
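The structured-hallucination case above is easy to reproduce. In the sketch below, the `target_market_age` values come from the example in the list; the type check and the `field_mismatches` helper are hypothetical stand-ins for whatever guardrails a pipeline actually runs.

```python
from typing import Dict, Tuple

_MISSING = object()  # distinguishes an absent key from an explicit null


def field_mismatches(predicted: dict, truth: dict) -> Dict[str, Tuple[object, object]]:
    """Return {field: (predicted, expected)} for every field that is not an exact match."""
    diffs = {}
    for key, expected in truth.items():
        got = predicted.get(key, _MISSING)
        if got != expected:
            diffs[key] = (None if got is _MISSING else got, expected)
    return diffs


truth = {"target_market_age": "15 to 35 years"}
predicted = {"target_market_age": "25 to 35"}

# A schema-style guardrail only sees that the field exists and is a string.
passes_type_check = isinstance(predicted.get("target_market_age"), str)

# A field-level comparison against verified ground truth catches the error.
diffs = field_mismatches(predicted, truth)
print(passes_type_check, diffs)
```

The type check passes while the field-level comparison reports the mismatch, which is why this class of error needs verified ground truth rather than schema validation to detect.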

What's next

SOB is a first step, not a finish line. We'll keep growing the benchmark along several axes:

- More datasets, including newer datasets with increasing complexity and difficulty.

- More schemas and difficulty tiers, including recursive types, unions, and large enum spaces.

- Continuous re-evaluation as new models ship, and ongoing transparent tracking of our own models against the same scoring harness so we can measure ourselves honestly.

Why we released SOB

Our goal is to be the best general model for deterministic tasks, and a key aspect of determinism is controllable and consistent output structure. The first step to making structured output better is to measure it and hold ourselves against the best.

- Docs: Structured Output in Interfaze

- Playground: Try structured output on Interfaze

- Paper: arXiv preprint

- Dataset: Hugging Face